The Infrastructure Pivot

Why the "Chatbot Era" is Over and the "Agentic Era" Has Begun

The Productivity Paradox of 2026

Last month, I found myself sitting across a polished mahogany table from a senior executive who looked for all the world like a man who had just purchased a Ferrari only to discover, upon popping the hood, that it was powered by a lawnmower engine. He was what we might call a “Model Adopter,” the kind of conscientious leader who had done absolutely everything right by the playbook of 2024. He had secured the expensive enterprise licenses, mandated the requisite “Prompt Engineering 101” workshops for his teams, and even enthusiastically established a dedicated Slack channel for his staff to celebrate their “AI Wins.” Yet, as he slid his laptop across the table to show me the fruits of this expensive labor, the air in the room was not filled with triumph, but with a profound, heavy confusion.

He pointed to a perfectly adequate, if slightly robotic, email generated by his expensive Co-Pilot. It was grammatically correct, structurally sound, and utterly devoid of any strategic spark. “I spent half a million dollars this year on this transformation,” he said, his voice dropping to a whisper that seemed to suck the oxygen out of the room. “Is this really all it does?” He wasn’t just asking about the email, of course. In that moment of candid frustration, he was giving voice to the question that has begun to haunt every boardroom, faculty meeting, and budget committee as we settle into the reality of 2026.

Where is the ROI?

For three years, we have been captivated by the promise that Generative AI would trigger an explosion of productivity comparable to the steam engine or the internet. We bought into the vision, purchased the tools, trained our people, and drafted the policies. And yet, when we look at the balance sheets of the vast majority of organizations—universities, non-profits, and enterprises alike—the needle on aggregate productivity has barely moved. In fact, if you look closely at the operational friction in many departments, it has actually dipped.

This stagnation is not a failure of the technology itself, which remains a marvel. It is a failure of our metaphor.

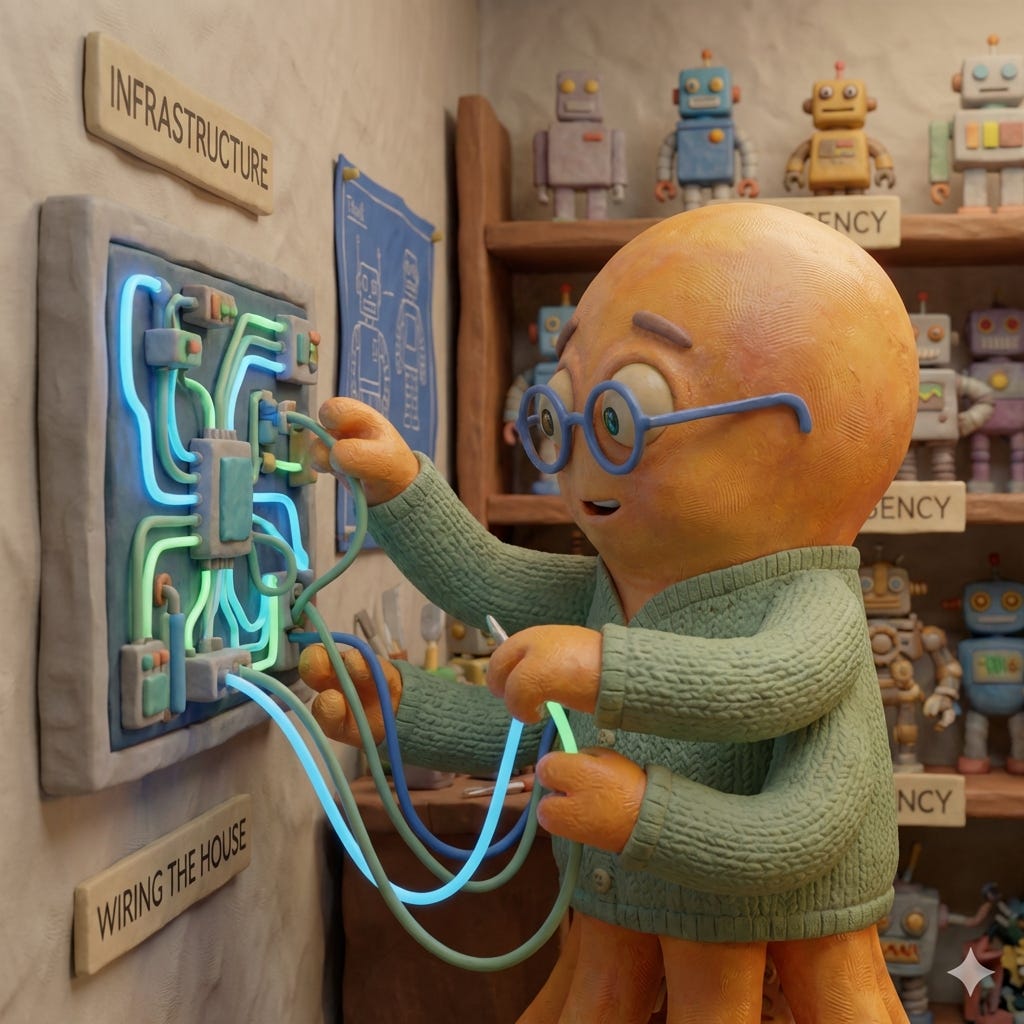

We have been treating AI like a Tool—a fancy hammer or a shiny new appliance that we hand to individual workers to help them hit nails faster or toast bread quicker. But AI is not the appliance. It is the Electricity. It is the Infrastructure that powers the entire kitchen. A tool adds linear value to a specific task; you hit the nail, the nail goes in. Infrastructure, however, enables a profound, positive flexibility. It is the invisible grid that allows any number of diverse, high-voltage applications—from the refrigerator to the microwave to devices we haven’t even invented yet—to function simultaneously and reliably. By obsessing over the tools (the chatbots) while ignoring the infrastructure (the data pipelines, the governance code, the agentic architecture), we have effectively bought a thousand high-end appliances for a house with no wiring. Until we do the hard, invisible work of upgrading the grid, we will remain stuck in the “Valley of Despair,” surrounded by potential power but unable to turn it on.

Definition: The 2026 Operations Pivot

The Operations Pivot is the strategic transition from “individual user adoption” (employees using chatbots) to “systemic agentic deployment” (organizations building autonomous workflows). It marks the end of the “PROMPT” era and the beginning of the “ORCHESTRATION” era.

This article is your blueprint for that transition. Now, I know what you’re thinking. “Infrastructure Governance” and “Agentic Architecture” don’t exactly roll off the tongue like the lyrics to a summer anthem. They sound a bit like... well, like work. But I want to challenge you to look closer. These aren’t just dry technical categories; they are the compass points for a profound liberation. We are going to synthesize insights from these four critical domains—weaving together the structural rigorousness of Infrastructure Governance and Agentic Architecture with the human vitality of Pedagogical Innovation and Economic Theory—to map a road out of this valley. It is a path that leads away from the exhaustion of the “Verification Tax” and toward a future where our systems finally possess the dignity to work quietly, reliably, and autonomously on our behalf.

Part 1: The Diagnosis (Why We Are Stuck)

To understand why we are stuck, we must stop guessing and look at the economics. Most AI failures aren’t caused by bad code; they are caused by a phenomenon known as the Productivity J-Curve.

When you move AI from a “sandbox” experiment to enterprise-scale integration, productivity doesn’t rise; it crashes. As identified by economists Erik Brynjolfsson, Daniel Rock, and Chad Syverson, the introduction of transformative technology initially suppresses performance while an organization invests in “intangible assets” like process redesign and reskilling. During this Valley of Despair (typically months 4–12), data reveals a staggering 30% drop in workforce morale.

Definition: The Double Work Paradox

The Double Work Paradox is the inevitable period during systemic transition where an organization must expend resources to maintain a deterministic legacy system while simultaneously investing in the construction of a probabilistic agentic system. It is the cost of running two companies at the same time.

This paradox manifests in two distinct forms of “Tax” that drain organizational energy: The Maintenance Tax and The Verification Tax.

The Maintenance Tax (The Cost of Parallel Systems)

The Maintenance Tax is the operational cost of keeping the old world alive while the new world is being born. It is the friction of duality. Until the new system is robust enough to fully take over, your team is forced to straddle two incompatible realities.

The Operational Split (Enterprise): Consider your customer service team. They are currently training a new “Tier 1 Support Agent” (AI). But because the Agent is only 80% reliable, they cannot release it. So, they must actively work the queues (answering phones, closing tickets) while also tagging thousands of conversation datasets to train the model. They are doing their job, and they are also doing the job of the data scientist, for the same salary, in the same 8-hour day.

The Non-Profit Split (Development): Your development director is trying to modernize donor relations. Ideally, an AI would draft personalized updates for 5,000 micro-donors. But the legacy CRM is full of messy, duplicate data (”John Smith” vs “J. Smith”). The director must now manually clean the database to prevent the AI from hallucinating relationships, while still handwriting the critical high-value thank you notes to ensure revenue doesn’t drop during the transition.

The Educational Split (University): A faculty member is asked to “innovate” by designing renewable, AI-integrated assignments. But the university’s accreditation body and the department’s tenure committee still measure success by the “Standard Essay.” The professor must therefore design the new, risky curriculum while simultaneously grading the old, safe one to ensure metrics don’t drop. They are teaching two courses for the price of one.

This Maintenance Tax is exhausting, but unlike the misty confusion of the “ROI question,” it is a known quantity with a clear solution: Strategic Scaffolding. The error most leaders make is assuming this tax can be paid out of their team’s existing energy reserves. It cannot. If you ask a team to maintain 100% of their old output while simultaneously building a new 20% workflow, you are not “innovating”; you are engineering burnout. You cannot simply demand the transition; you must build the scaffolding that makes the transition survivable.

This begins with the 30% Rule. Effective implementation requires setting aside 25-30% of your total AI budget specifically for change management and psychological support. This is not “fluff.” The primary friction in the J-Curve is not technical; it is emotional. It is the fear of obsolescence combined with the frustration of double work. This budget funds the extensive training, the “safe harbor” workshops where failure is normalized, and the communication campaigns required to remind the team that the dip in productivity is an investment, not a failure.

Finally, you must invest in Transition Labor. The most dangerous misuse of talent during this phase is forcing your best architects to push brooms. If your most visionary developers or curriculum designers are bogged down in the manual “clean-up” of the legacy system, they cannot build the future. The strategic move is to hire temporary staff or contractors to handle the “Legacy Workflow”—keeping the lights on and the data clean—specifically to free up your core team to focus entirely on the “AI Workflow.” You are buying them the time and mental space to construct the new infrastructure.

The Verification Tax (The Cost of Probabilistic Truth)

The Maintenance Tax, while exhausting, is at least a familiar beast. It is a renovation problem. We know how to solve it with budget and temporary labor. The Verification Tax, however, is an entirely different species of predator, and it is the primary reason why standard “change management” playbooks are failing.

When you upgrade a team from Excel to Tableau, you pay an “upskilling tax.” The team must learn a new interface. But once they learn it, the tool is a faithful servant. It is deterministic: if the input formula is correct, the output chart is demonstrably true. You do not have to check if Tableau is “hallucinating” a sales trend. It does exactly what it is told, every single time.

AI is not deterministic. It is probabilistic. It is not a calculator; it is a dreamer.

This means you cannot treat AI adoption as a simple “upskilling” problem. It does not matter how well you train your team on “prompt structure”; the underlying system remains fundamentally stochastic. This introduces a permanent, non-negotiable layer of friction that no other corporate tool has ever imposed: The Audit. Every output generated by an AI—whether it’s a marketing email, a snippet of code, or a student’s paper—must be treated as a hypothesis, not a fact. We have therefore replaced the labor of creation (writing the email) with the labor of verification (investigating the email).

The Copywriter’s Trap: A junior marketer can generate 50 blog post ideas in a minute. But a senior editor must now read and fact-check 50 blog posts. The “production” time went down, but the “verification” time skyrocketed, often creating a bottleneck at the most expensive layer of talent.

The Professor’s Dilemma: In the past, plagiarism was rare and obvious. Now, it is ubiquitous and subtle. The professor shifts from “grader” to “forensic analyst,” spending hours scanning text for the “hallucinated citations” typical of AI.

We have added a new layer of cognitive load without removing any of the old execution load. But to call this “multi-tasking” is to miss the severity of the shift. We are not asking people to do more of the same work; we are asking them to do a fundamentally different type of work.

Metric-based organizations hire people to be Foremen, not Architects. A foreman is valuable because they can execute a plan with precision and speed. They know how to lay the bricks (write the code, draft the email) without asking questions about the physics of the wall. An architect, however, must understand the structural integrity of the entire building. When we introduce Generative AI, we are effectively telling our foremen, “Stop laying bricks. Instead, I want you to look at this blueprint generated by a machine and tell me if the house will fall down in ten years.”

We are forcing execution-tier workers to become strategy-tier auditors overnight.

The obvious operational answer would be to swap the talent: fire the foremen and hire architects. But the Maintenance Tax makes this impossible. We cannot let go of the people who know how the legacy system works, because the legacy system is the only thing currently generating revenue. We are stuck in a talent bind: we need the “builders” to keep the lights on, but we need those same builders to develop the critical distance of the “verifier” to manage the AI.

In Higher Education and L&D departments, the knee-jerk reaction to this dilemma is to say, “We need to teach Critical Thinking.”

I chafe at this term. It is a lazy piece of administrative shorthand, a suitcase word into which we pack everything we hope our students and employees will magically become. It is too vague to be actionable. Telling a junior developer or a freshman student to “think critically” about an AI output is like telling a drowning man to “swim better.” It offers no technique.

What we are actually asking for is something far more specific and difficult to train: Evaluative Judgment.

Evaluative Judgment is not just general skepticism. It is the ability to diagnose the quality of an output without having generated it yourself. It requires a deep, almost instinctual understanding of what “good” looks like, independent of the labor of creation. For professionals who have spent their entire careers defining their value by making things, shifting to a role defined by judging things is not an upgrade; it is an identity crisis. Until we name this specific cognitive load—and build the scaffolding to support it—our teams will continue to drown in the gap between the Foreman and the Architect.

Part 2: The Cure (From Pilots to Pipelines)

Even if we can help with training people to engaage their work with better evaluative judgment, the specific technical cure for the J-Curve requires a transition to Agentic Architecture. However, we must be careful not to make the mistake of thinking this is a binary switch. We often talk about “Chatbots” vs. “Agents” as if they are two distinct species, separated by a massive chasm. In reality, the transition is a gradient. It is an evolution, not a replacement.

I have mapped this evolution as nine distinct stages of AI maturity, moving from the basic “Zero-Shot Chatbot” we all used in 2023 to the “Autonomous Strategic Agent” of 2026. Between these two poles lies a vast spectrum of capability. As we move up the ladder, we don’t just get “smarter” text; we unlock distinct features like Memory (the ability to recall past preferences), Tool Use (the ability to browse the web or query a database), and Reflection (the ability to critique one’s own plan before executing it).

The difference between a toy and a teammate is Agency, but agency is a volume knob, not a light switch. In the early stages, the system has low agency; it waits for you to drive. In the later stages, it has high agency; it drives while you sleep. The critical insight for leaders is that not every task requires Stage 9 autonomy. You don’t need a strategic agent to set a timer, just as you don’t need a self-driving car to verify if you locked the front door. “Right-sizing” the agency to the task is how we avoid over-engineering simple problems.

Consider the “Chatbot” workflow (Stage 1). In this model, the human act as the router for every single micro-step. You ask the bot to draft an email. You read it. You edit it. You copy it. You open Outlook. You paste it. You double-check the recipient. You hit send. The AI has done the “creation,” but you have done the “integration.” The bot is an island, and you are the bridge.

Now, consider the “Agentic” workflow (Stage 5+). You do not ask for an email; you define a goal: “Schedule a kick-off meeting with Sarah for next week.” The Agent does not just give you text; it leaves the chat window. It uses a tool (an API) to check your calendar. It uses another tool to check Sarah’s calendar. It proposes a time. It sends the invite. It updates your CRM. You do nothing. You have successfully deleted the “integration” labor entirely.

Definition: Human-on-the-loop

Human-in-the-loop: The human is a critical component of the machine’s operation (e.g., a driver steering a car). If the human stops, the process stops.

Human-on-the-loop: The human acts as a supervisor or governor (e.g., a pilot monitoring an autopilot). The machine executes independence; the human only intervenes to set goals or correct drift.

This shift from “Human-in-the-loop” to “Human-on-the-loop” is the economic unlock. It breaks the Verification Tax by balancing the equation. We accept that “The Audit” is a heavy, high-cognition task (pricing it in), but we offset that cost by deleting the low-cognition labor of execution. You spend more brainpower verifying the meeting time, but you save the ten minutes of back-and-forth emailing. The net result is a gain in strategic focus.

But there is a catch. To sustain a “Human-on-the-loop” model, the agent must be reliable enough to be ignored for long stretches. And right now, they aren’t.

This brings us to the counter-intuitive reality of 2026: Agents are fragile. This surprises people. We assume that because AI is “smart,” it must be robust. But while a Chatbot is resilient (if it hallucinates a fact in a poem, nobody dies), an Agent is brittle because it must interact with the rigid, unforgiving world of software APIs and databases.

The first source of fragility is the Collision of Logic. Agents operate using probabilistic decisions (fuzzy logic), but they must interact with systems that require deterministic accuracy (strict logic). An LLM is a prediction engine; it deals in likelihoods. A software API is a rule engine; it deals in absolutes. If a chatbot is 99% confident it has found the right file to summarize, the 1% error margin is just a bad summary. But if an agent is 99% confident it has found the right server configuration to delete, that 1% error margin is a catastrophic outage. We are essentially trying to drive a train (rigid, deterministic) with a sail (fluid, probabilistic). The wind implies direction, but the rails demand precision.

The second source of fragility is Context Collapse. A human employee operates within a vast, invisible web of “common sense” that dictates how work actually gets done. They know not to email a client at 3 AM. They know not to use a jovial emoji in a condolence note. They know that “ASAP” from the CEO means “now,” but “ASAP” from a peer might mean “tomorrow.” An agent, unless explicitly trained on these nuances, sees only the raw goal: “Send Email.” It lacks the social and temporal wrapper that protects our workflows from friction. Without these guardrails, an autonomous agent is a sociopath with a keyboard—hyper-efficient, but socially destructive.

Because they are fragile, agents cannot survive on “Policy” alone. You cannot write a PDF rulebook that tells an AI, “Don’t be hallucinate.” You must build the Infrastructure—the technical guardrails—that makes it physically impossible for the agent to derail. To function safely, they do not need rules; they need rails.

The New Governance: Trust is Code, Not Paper

This fragility creates a governance crisis. If an agent is a “sociopath with a keyboard”—hyper-efficient but lacking common sense—how do we trust it?

For the last two years, our strategy has been “The Acceptable Use Policy” (AUP). We write a PDF document telling employees, “Don’t put proprietary data into ChatGPT,” and we hope they read it. This approach relies on the assumption that the user is a human who can be held accountable. But in an agentic workflow, the user is software. You cannot hand an employee handbook to an algorithm. An agent does not “care” about your policy; it only cares about its goal.

To rely on a PDF policy in an agentic world is “Audit Washing.” It is governance theater—a performance designed to make auditors feel safe without actually reducing risk. It is an attempt to use a “speed limit sign” (policy) to stop a runaway train.

In the Agentic Era, governance must be hard-wired into the stack. We are moving from a model of Governance as Policy to a model of Governance as Code.

Definition: Governance as Code

Governance as Code is the shift from “social controls” (trusting users to follow policy) to “infrastructure controls” (restricting machines via code). It treats the agent not as a trusted employee, but as a potentially dangerous utility. Instead of asking the agent to “be careful,” we give it an API key that physically lacks the permission to destroy data. We don’t ask electricity to “be safe”; we insulate the wires.

For many leaders in education and the non-profit sector, this feels cold. When we hear “governance,” we think of mission statements, board votes, and shared governance committees. These are political processes designed to align human values. But applying political governance to an agent is a category error. An agent is not a colleague; it is a utility. It is closer to the electricity running through your walls than to the faculty member sitting in your senate. You do not govern a power grid with a mission statement; you govern it with circuit breakers, fuses, and insulators. To survive the Agentic Era, we must stop trying to negotiate with our software and start engineering the constraints that make it safe to use.

But what does “insulating the wires” look like in practice? It moves us away from vague ethical debates and toward concrete engineering decisions. If you are building an AI strategy today, you cannot wait for the dust to settle. You must prioritize the following five infrastructure pillars immediately. These are not optional “features”; they are the structural requirements that effectively separate a helpful tool from a catastrophic liability.

1. Zero-Knowledge Machine Learning (ZKML)

The Problem: How do we let an agent analyze our financial data without exposing that data to a public model provider?

The Solutions: ZKML. This cryptographic protocol allows a model to verify a claim about data (e.g., “This borrower is credit-worthy”) without ever “seeing” the underlying data itself.

Governance as Code: We don’t ask the vendor to “promise” they won’t steal our data. We use math to make it impossible for them to see it.

2. The Model Context Protocol (MCP)

The Problem: Every time we connect an agent to a new database, we have to write custom code, creating new security vulnerabilities.

The Solution: MCP acts as the “USB port” for AI. It allows an agent to “plug in” to local data (Google Drive, Slack, SQL) in a standardized, secure way.

Governance as Code: We don’t rely on developers to remember security best practices for every new integration. We enforce a single, standardized security layer for every connection.

3. Local Sovereignty (Edge AI & SLMs)

The Problem: Sending every process to a massive cloud model (like GPT-4) is expensive, slow, and leaks privacy.

The Solution: Running “Small Language Models” (SLMs) directly on local devices or on-premise servers (Edge AI).

Governance as Code: We don’t create a policy saying “Don’t upload sensitive HR data.” We simply route HR tasks to a local model that quite literally cannot access the internet. The privacy is enforced by the laws of physics, not the laws of HR.

4. Agentic Identity (Service Accounts & RBAC)

The Problem: If an agent acts on behalf of a user, does it have all of that user’s permissions? Can it email the CEO?

The Solution: Giving agents their own distinct “Service Accounts” with Role-Based Access Control (RBAC). An agent is never given “admin” access; it is given “least privilege” access necessary for its specific job.

Governance as Code: We don’t trust the agent to “stay in its lane.” We issue it a digital badge that only opens specific doors. If it tries to open the “Payroll” door, the door simply remains locked.

5. Automated Circuit Breakers (The Kill Switch)

The Problem: Agents can get stuck in loops, spending thousands of dollars or emailing thousands of people in minutes.

The Solution: Hard-coded limits on behavior—rate limits (max 50 emails/hour) and simple budget caps (max $20/day).

Governance as Code: We don’t wait for a human manager to notice the agent is acting crazy. The infrastructure automatically cuts the power the moment the agent exceeds its defined parameters, preventing a glitch from becoming a scandal.

Part 3: The Pedagogical Mirror (The University as a Studio)

Nowhere is the pain of the “Tools mindset” more acute than in Higher Education, primarily because our entire system was architected for a different century. We are still operating on the Industrial Model established in the early 1900s—a system designed to standardize human cognition for a factory economy. In that world, information was scarce, and the ability to produce text was a reliable proxy for cognitive discipline.

For three years, we have been trapped in a “Cheating Arms Race” because we are insisting on running this 20th-century factory in an era of infinite supply. The Factory Model relies on a simple contract: the student proves they did the work by producing a standardized artifact (the essay). The professor verifies the labor by grading the artifact. But in an agentic world, the artifact is free. If an AI can generate a B-plus essay in 5 seconds, the artifact no longer proves human competence. When we try to solve this by using AI detectors (more tools), we are simply paying the Verification Tax—spending hours policing a product that is no longer scarce.

The escape route mirrors the corporate transition. We must move from the Factory (policing the product) to the Studio (curating the process).

Definition: The Renewable Assignment

The Renewable Assignment is an artifact that adds value to the world, rather than ending up in a trash can. Unlike a “Disposable Assignment” (written for an audience of one, graded, and discarded), a renewable assignment interacts with real-world data, community partners, or open repositories. It requires the student to act not as a simulator of expertise, but as a practitioner of it.

This distinction is counter-intuitive. We tend to think of the “Factory Model” as the peak of infrastructure—after all, it was the steam engine and electricity that made the industrial factory possible. Why does this new infrastructure (AI) break the factory instead of turbocharging it?

The difference is in what is being automated. 20th-century infrastructure automated muscle, which allowed the factory to standardize production. But the Educational Factory uses cognitive effort (writing the essay, solving the equation) as its primary metric of verification. When you introduce an infrastructure that automates thought, you destroy the metric. In the factory, using AI is “cheating” because it bypasses the effort we are trying to measure.

But in the Studio Model, the goal is not to prove effort; the goal is to create impact. In a design studio, using a power tool isn’t cheating; it’s leverage. In the Studio Model, AI ceases to be a threat to integrity and becomes the infrastructure that makes higher-order work possible.

Consider the difference:

The Factory Assignment: “Write a 5-page paper on local water policy.” This tests if the student can synthesize text—a task the AI dominates.

The Studio Assignment: “Partner with the local water board to analyze their 2024 public dataset and build a visualization dashboard for their website.”

This structural shift changes the fundamental equation of the classroom. A political science sophomore cannot code a dashboard alone; the technical barrier is too high. But a political science sophomore plus an Agentic Coding Assistant can. By treating AI as infrastructure, we raise the ceiling of what is possible for a novice to achieve. We move the assessment from “Did you write this sentence?” (a low-value proxy) to “Did you solve this problem?” (high-value reality). We stop asking students to simulate work, and start asking them to do work.

However, this transition is terrifying for the faculty member because it introduces unpredictability. In the Factory Model, the professor holds the “Answer Key.” They know what a B-plus paper looks like before it is written. In the Studio Model, the problem is real, meaning the solution is unknown. The public dataset might be messy; the water board might change their requirements; the agent might hallucinate a library that doesn’t exist. There is no answer key for reality. This loss of control forces the professor to abandon the safety of the podium and enter the mud with the student.

The Faculty Shift: From Policeman to Curator

The move to renewable assignments is not just a change in topic; it is the implementation of a Human-on-the-loop management structure in the classroom. In this model, the student acts as the “manager” of the AI, setting goals, defining parameters, and verifying outputs. The professor, in turn, acts as the “executive,” reviewing not just the final product, but the student’s management strategy.

I want to pause here and acknowledge that this shift can feel like an existential crisis. If the course is no longer in the business of forcing students to produce artifacts (writing the code, drafting the essay), then what exactly is it producing? For a century, we have equated “learning” with “making.” If the machine does the making, it is natural to worry that we have hollowed out the learning.

This feeling of “abdication of duty” is real, and it is valid. It comes from a deep commitment to student growth. We worry that by allowing AI to handle the “grunt work” of syntax and structure, we are bypassing the struggle that builds character. If “Content is King,” and the AI can generate infinite content, then it feels like the King is dead.

But we must be gentle with our own definitions of “Content.” In the 20th century, content was Information (names, dates, formulas) and Synthesis (arranging that information into a coherent argument). Today, Information is free and Synthesis is cheap. If we design courses solely to test the ability to retrieve information and synthesize it into a standardized format, we are testing a commodity skill. We are training students for a world that no longer exists.

The new scarcity is not generation; it is comparison. The value has shifted from the ability to create to the ability to discern.

This brings us to the core competency of the AI Studio: Evaluative Judgment.

Definition: Evaluative Judgment

Evaluative Judgment is the capability to make informed decisions about the quality of work—both one’s own and that of others. Originally defined by researchers like Joanna Tai et. al., it shifts the goal of higher education from “pleasing the teacher” to “internalizing the standard of quality.” In the age of AI, this is the ultimate safeguard. If a student cannot distinguish between a hallucination and a fact, or between a mediocre draft and a polished insight, they are not a user of AI; they are a victim of it.

I won’t lie to you: Teaching this is a harder job than grading. When you grade an essay, you can stay clean; you sit at your desk with a red pen and judge the final output. To grade judgment, you effectively have to get in the mud with the student. You have to understand the tools as well as they do. You have to embrace the messiness of iteration. But deeper down, I think we all know that this “mud” is where the actual learning has always happened.

This is the only skill that survives graduation. In a professional environment where AI can generate infinite drafts, the employee who wins is not the one who can write the fastest, but the one who can look at five AI-generated options and say, “That one. That is the one that matches our brand voice and solves the client’s problem.” This is what it means to take ownership of a result you didn’t fully create.

For mission-driven organizations and liberal arts colleges, this is where the conversation about “Academic Integrity” must go. It is no longer just about following rules; it is about taking responsibility for knowledge in the world. When you ask a student, “Have you developed the internal standard of quality necessary to sign your name to this work?” you are asking a moral question. You are asking them to refuse to abdicate their agency to the machine. You are teaching them to say: “I am responsible for this. This is mine, even if the way it came to be was through managing an AI system.”

Part 4: The New Operating Manual (The AI Studio Model)

So, what does this look like on Monday morning?

Whether you are a University Dean or a CEO, the organizational structure you need to build is the AI Studio.

The AI Studio is not an “IT Support Ticket” desk. It is a centralized hub of expertise that functions like an internal consultancy. Its mandate is not “adoption” (getting everyone to use AI) but “construction” (building the agents that do the work).

In a decentralized world, the default state of AI adoption is entropy. Without a center of gravity, your organization will fragment into a thousand rogue pilots. Marketing will build a “Copywriting Bot” on ChatGPT Team; Finance will build a “Forecasting Agent” on a local Llama instance; HR will inadvertently upload sensitive data to a random PDF summarizer. This doesn’t just create security leaks—though it certainly does that—it creates a Tower of Babel. You end up with fifty different “meeting summarizers” that don’t talk to each other, don’t share memory, and don’t adhere to the same safety rails. The AI Studio exists to prevent this fragmentation by centralizing the infrastructure while distributing the innovation.

The 4 Pillars of the AI Studio

To manage this transition effectively, the Studio must support the full spectrum of AI maturity. It is not enough to just manage prompts; you must manage the evolution of agency. For every pillar of infrastructure, there is a distinct tiered approach as you move from Low Agency (Chatbots) to Mid Agency (Workflows) to High Agency (Autonomous Agents).

1. The Library (Standardization)

The Library is where institutional knowledge becomes code. We stop letting every employee reinvent the wheel and start treating our best processes as software assets.

Low Agency (The Golden Prompt): At the chatbot level, the Library hosts version-controlled “Golden Prompts.” Instead of 50 employees writing their own (flawed) meeting summary prompts, they all use

meet_sum_v4, which has been vetted for accuracy and tone.Mid Agency (The Workflow Chain): As we move to Agentic Workflows, the Library stores “Chains”—sequences of prompts that execute a specific process. For example, the “Quarterly Report Generator” is not just one prompt; it is a stored recipe that first retrieves data, then outlines the narrative, and finally generates the text. The human triggers it, but the structure is standardized.

High Agency (The Agent Definition): At the highest level, the Library acts as the repository for “Agent Definitions”—conf files that define the identity, permissions, and tool access of a “Procurement Agent.” This ensures that the agent follows the same strict logic path regarding spending limits, regardless of which department deploys it.

2. The Sandbox (Experimentation)

You cannot build safe agents on live customer data. The Sandbox provides a gradient of safety corresponding to the risk of the system.

Low Agency (The Text Gym): For chatbots, the Sandbox is a “Text Gym”—a place to test prompts against static documents to ensure they don’t hallucinate. We check if the bot can accurately summarize a PDF without inventing facts.

Mid Agency (The Integration Lab): For workflows, we need to test logic and connectivity. This is a read-only environment where the system can query your database to “practice” gathering information, but it is physically blocked from writing any changes. We verify clarity of thought without risk of action.

High Agency (The Digital Twin): For autonomous agents, we need a “Digital Twin.” This is a full simulation of your organization filled with synthetic data—fake customers, dummy bank accounts, and phantom inventory. Here, an agent can attempt to “process refunds” or “delete files.” If the agent goes rogue and deletes the database, it deletes the simulation, not your company.

3. The Red Team (Verification)

The Red Team is the “immune system” of the organization. Their job is to expose fragility before it becomes a liability, scaling their attacks to match the autonomy of the system.

Low Agency (Injection & Bias): When testing chatbots, the Red Team focuses on “Jailbreaking.” They try to trick the bot into revealing toxic content, competitors’ secrets, or ignoring safety guidelines through prompt injection.

Mid Agency (Logic Stress Testing): For workflows, the focus shifts to “Logic Gaps.” The Red Team analyzes the decision tree: “Why did this Hiring Workflow reject all applicants from this specific zip code?” They probe for algorithmic bias and logical dead-ends in the process design.

High Agency (Circuit Breakers): For autonomous agents, the Red Team tests for “Runaway Loops.” They simulate worst-case scenarios—what happens if the “Procurement Agent” gets stuck in a retry loop buying 10,000 staplers? They ensure that the automated “Circuit Breakers” (hard-coded budget caps and rate limits) actually trip before the infrastructure melts down.

4. The Bridge (Education)

The Bridge is the education arm, but it is not an IT seminar. It is a management training course designed to upskill the workforce to handle increasingly complex tools.

Low Agency (Prompt Literacy): At the basic level, we teach “Prompt Engineering.” Staff learn the syntax of how to ask better questions to get better answers from a static bot.

Mid Agency (Process Decomposition): For workflows, we teach “Process Design.” Employees learn how to break a complex job (writing a grant) into discrete, chainable steps that a machine can handle sequentially. They stop being writers and start being architects of their own work.

High Agency (Managerial Oversight): At the agent level, we teach “Managerial Oversight.” We train staff how to audit the behavior of a digital employee. The skill is no longer about talking to the machine; it is about reviewing the logs and “dashcam footage” of the agent’s actions to ensure it is aligned with organizational values.

Hardware Sovereignty: The Rise of Local Inference

A critical, often overlooked component of this pivot is hardware. We are seeing a massive shift toward Local Inference. Relying entirely on cloud APIs (OpenAI, Anthropic) is expensive and introduces latency and privacy risks.

The AI Studio of 2026 runs a Hybrid Stack. Heavy reasoning (complex analysis, creative strategy) happens in the cloud, utilizing the massive models of the major providers. But routine, high-volume tasks (summarization, categorization, initial drafting) run locally on dedicated hardware in the office. This is why we are seeing the explosion of “Mac Studios” in the enterprise server closet—they are the perfect, cost-effective vessel for running local models (like Llama 3 or Mistral) that keep your data within your four walls.

If data is the new oil, you need your own refinery. The Hybrid Stack ensures that you own the means of production, not just the subscription to it.

Part 5: Conclusion (The Architect’s Mindset)

The transition from 2023 to 2026 is the transition from Magic to Engineering.

In the beginning, AI felt like magic. Ideally, it should feel like magic. But magic doesn’t scale. Engineering scales. Reliability scales. Governance scales.

The “Productivity J-Curve” is daunting. The “Valley of Despair” is real. But the only way out is through. We have to stop treating AI as a “thing you buy” and start treating it as specific “work you do.”

For the CEO: Stop asking “How do we get AI?” and start asking “What structural friction can we remove with an Agent?”

For the Educator: Stop asking “How do I stop them from cheating?” and start asking “What can they build now that they have this power?”

We are done playing with tools. It is time to build the house.

Key Terms Taxonomy

Productivity J-Curve: The economic theory stating that new technologies cause an initial dip in performance due to the need for simultaneous maintenance of old systems and investment in new ones.

Zero-Knowledge Machine Learning (ZKML): A cryptographic method allowing verification of data properties without revealing the underlying data.

Renewable Assignment: An assessment designed to create value for a community beyond the classroom, utilizing AI as a scaffolding tool.

Local Inference: Running AI models on local hardware (on-premise) rather than via cloud APIs, prioritizing privacy and cost control.

I've hit this exact productivity paradox running my own autonomous agent. The agent can generate outputs 10x faster, but the oversight, verification, and integration work actually increased my cognitive load. The infrastructure to make agents truly autonomous isn't there yet - we're in the 'Ferrari with a broken engine' phase. The gap between what demos promise and what production delivers is massive. What I've found works better than progressive autonomy is clear escalation rules. 'You can modify dev, but prod needs approval' is clearer than 'use your judgment.' Has anyone figured out how to measure the oversight burden versus the productivity gain?